Machine vision

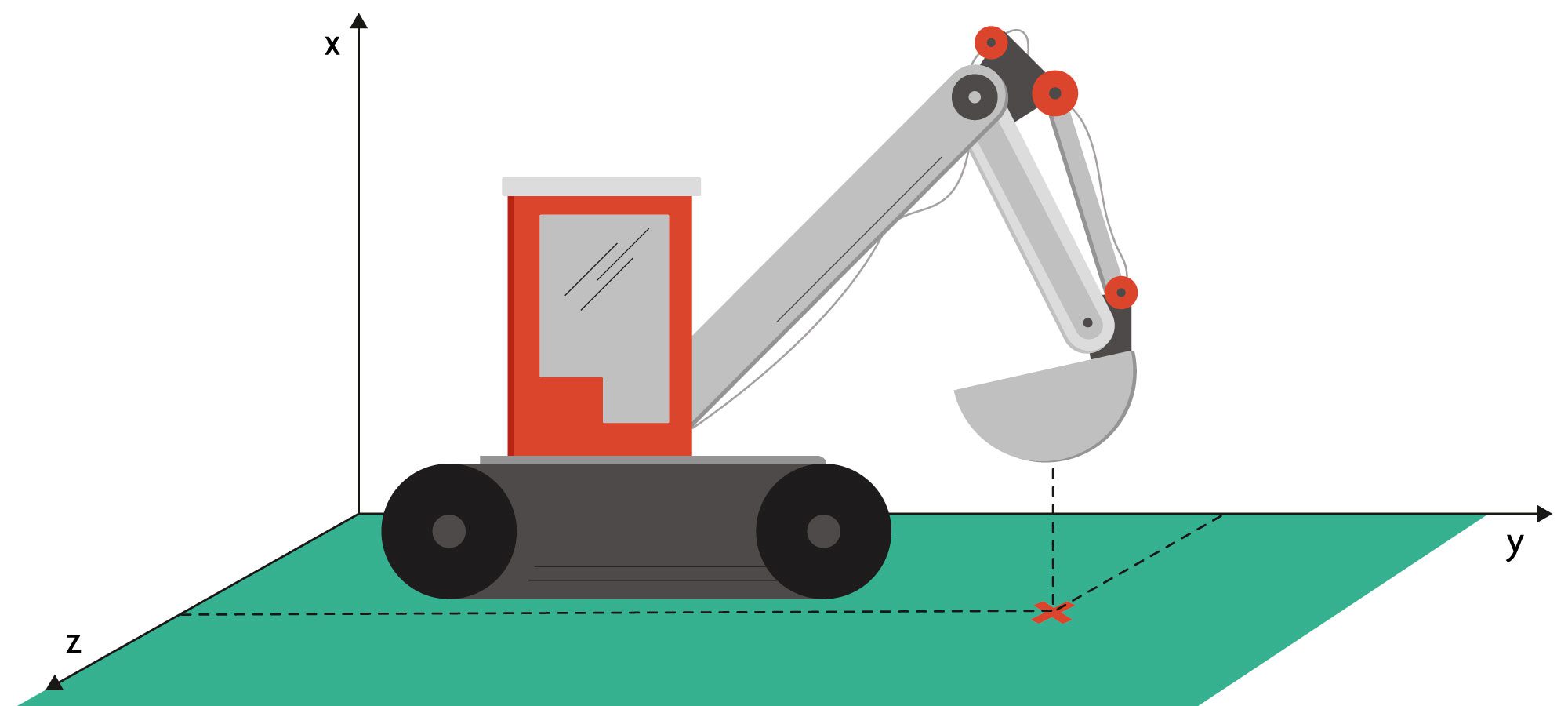

When it comes to logging the exact trajectory of an excavator, there are several solutions on the market. Kapernikov applies its expertise in computer vision and machine learning to track machine movements with speed and precision.

In many situations, it is useful to track the movements of an excavator accurately: for example, when the machine operator needs feedback on the right spot to drop off a load, or when you want to monitor the deposited volumes and build up a 3D model of the displaced mass.

This challenge is not new, and several solutions are already available. One approach is to mount encoders on the excavator arm that measure the exact position of each joint and, from these data, compute the end-effector position. However, this solution carries a risk: the encoders are fragile and can easily be damaged as the excavator arm and the bucket move around.
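
To make the encoder approach concrete, here is a minimal sketch of how joint angles can be turned into an end-effector position with planar forward kinematics. It assumes the arm moves in a single vertical plane and that each encoder reports the angle of its link relative to the previous one. The function name and the link lengths are hypothetical and not taken from any real machine.

```python
import math

def end_effector_position(joint_angles_rad, link_lengths_m):
    """Planar forward kinematics for a serial arm (boom, stick, bucket).

    joint_angles_rad: angle of each link relative to the previous link
                      (the first one relative to the horizontal).
    link_lengths_m:   length of each link, in the same order.
    Returns (x, z): horizontal reach and height relative to the boom pivot.
    """
    if len(joint_angles_rad) != len(link_lengths_m):
        raise ValueError("need one angle per link")
    x = z = 0.0
    absolute_angle = 0.0
    for angle, length in zip(joint_angles_rad, link_lengths_m):
        absolute_angle += angle          # relative angles accumulate along the chain
        x += length * math.cos(absolute_angle)
        z += length * math.sin(absolute_angle)
    return x, z

# Hypothetical link lengths in metres: boom, stick, bucket.
LINKS = (5.7, 2.9, 1.5)
angles = (math.radians(45), math.radians(-90), math.radians(-45))
reach, height = end_effector_position(angles, LINKS)
```

Because every joint angle feeds into the final position, an error or failure in any single encoder shifts the computed bucket position, which is one reason fragile sensors on the arm are a concern.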

Another existing approach is to measure the hydraulic pressure during operation and use those data to reconstruct the excavator’s trajectory. This method is more robust, but it can be quite error-prone if the high dynamic loads during digging are not taken into account.

This is a challenge that Kapernikov loves to take up, as positioning objects is second nature to us. We gathered the speed and precision requirements and set out to analyze the alternatives.

At first, we narrowed our search down to ultra-wideband (UWB) positioning. A beacon has to be mounted on the end effector in a rugged fashion; UWB positioning technology then lets us calculate its position with centimeter accuracy. This solution is feasible and flexible across application domains, but it still requires installing fragile components.
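
As an illustration of how a beacon position can be computed from UWB range measurements, the sketch below solves a basic trilateration problem. It assumes four fixed anchors at known, non-coplanar positions and noise-free distances to the beacon. The anchor layout and function names are hypothetical, and a real system would typically use more anchors and a least-squares or filtering step to cope with measurement noise.

```python
import math

def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def trilaterate(anchors, ranges):
    """Locate a beacon from its distances to four known anchors.

    Subtracting the first sphere equation from the other three removes the
    quadratic term and leaves a 3x3 linear system, solved with Cramer's rule.
    """
    if len(anchors) != 4 or len(ranges) != 4:
        raise ValueError("this sketch expects exactly four anchors and ranges")
    (x0, y0, z0), r0 = anchors[0], ranges[0]
    k0 = x0 * x0 + y0 * y0 + z0 * z0
    a, b = [], []
    for (xi, yi, zi), ri in zip(anchors[1:], ranges[1:]):
        a.append([2 * (xi - x0), 2 * (yi - y0), 2 * (zi - z0)])
        b.append(r0 * r0 - ri * ri + (xi * xi + yi * yi + zi * zi) - k0)
    det = _det3(a)
    if abs(det) < 1e-9:
        raise ValueError("anchors are coplanar; the position is ambiguous")
    solution = []
    for col in range(3):
        # Cramer's rule: replace one column of the matrix with the right-hand side.
        m = [row[:] for row in a]
        for row in range(3):
            m[row][col] = b[row]
        solution.append(_det3(m) / det)
    return tuple(solution)

# Hypothetical anchor positions in metres and a simulated beacon location.
ANCHORS = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (0.0, 10.0, 0.0), (0.0, 0.0, 10.0)]
beacon = (3.0, 4.0, 2.0)
measured = [math.dist(beacon, anchor) for anchor in ANCHORS]
estimate = trilaterate(ANCHORS, measured)
```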

That is why we decided to go for computer vision. We took images from a security camera that was already in place on site and retrained a human pose estimation model to recognize the ‘limbs’ of an excavator.

The result is more stunning than a tracked ballet dancer. From a single image stream, we successfully reconstructed the exact 3D position of the excavator bucket. Having this in place makes it easy to log trajectories, give feedback to an operator or build a GIS model of the displaced mass. Have a look at our proof-of-concept video (recorded on footage that is not under NDA), in which we demonstrate this.
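
The article does not describe how depth is recovered from a single camera. One common option, shown here purely as an illustrative sketch and not as the method actually used, is to back-project the detected bucket keypoint through a pinhole camera model and intersect the resulting ray with a known plane, for example the vertical plane in which the arm swings. The intrinsics (`fx`, `fy`, `cx`, `cy`), the plane parameters and the function name are all assumptions.

```python
def pixel_to_3d_on_plane(u, v, fx, fy, cx, cy, plane_point, plane_normal):
    """Intersect the viewing ray of pixel (u, v) with a plane.

    All coordinates are in the camera frame (camera at the origin, looking
    along +Z). The plane is given by any point on it and its normal vector.
    """
    # Ray direction through the pixel for an ideal pinhole camera.
    d = ((u - cx) / fx, (v - cy) / fy, 1.0)
    n_dot_d = sum(n * di for n, di in zip(plane_normal, d))
    if abs(n_dot_d) < 1e-12:
        raise ValueError("viewing ray is parallel to the plane")
    n_dot_p = sum(n * p for n, p in zip(plane_normal, plane_point))
    t = n_dot_p / n_dot_d
    if t <= 0:
        raise ValueError("intersection lies behind the camera")
    return tuple(t * di for di in d)

# Hypothetical intrinsics and a plane 10 m in front of the camera.
FX, FY, CX, CY = 1000.0, 1000.0, 960.0, 540.0
bucket_xyz = pixel_to_3d_on_plane(
    u=1160.0, v=440.0, fx=FX, fy=FY, cx=CX, cy=CY,
    plane_point=(0.0, 0.0, 10.0), plane_normal=(0.0, 0.0, 1.0),
)
```

The accuracy of such a reconstruction depends on how well the camera is calibrated and on how closely the bucket actually stays in the assumed plane.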