CopernNet : Point Cloud Segmentation using ActiveSampling Transformers

In the dynamic field of railway maintenance, accurate data is critical. From ensuring the health of ...

Published on: October 14, 2019

ETL Technology choices

In a previous article we introduced a number of best practices for building data pipelines, without tying them to a specific technology. Let’s see how this applies to several different technologies.

When building pipelines in Python (using Pandas, Dask, PySpark, etc.) we think that Dagster is worth considering. Dagster is based on a clear idea about best practices for writing quality data pipelines and we think that it shows. Among other things, it has:

In addition, we recommend spending enough time on automating your setup (using Anaconda, Pipenv, Docker, etc.).

Most graphical tools make it very easy to visualize how data flows through low-level operations (join, filter, map, etc.). Some tools also make it possible to assemble a number of low-level blocks into a higher-level operation. However, the possibilities of defining inputs and outputs for a reusable unit are limited, unless you resort to writing code. For instance, this page shows how to isolate reusable logic in SSIS using the GUI.

When you have the choice between a code-based development environment and a graphical design tool, go for the code based one. When using SAS, we prefer to write our own SAS code rather than using some point and click tool that will generate SAS code for us in the background. No matter how good the graphical tool is, we really think that being able to use a modern git-based concurrent development workflow is worth a lot.

Note that a lot of the code-based tools have a GUI that allows users to visualize a data flow. For instance, Dagster has dagit.

The size of master data is often measured in Gigabytes, rather than what we usually consider to be big data. So, why bother with the complexity of a system like Spark?

First of all, Apache Spark is an open source tool with a vibrant community. This has a few practical advantages. Not really the fact that it’s free: most customers are not really considering the cost of an ETL system in their decisions anyway. However, being open source comes with flexibility. We can deploy Spark wherever we want: on the laptop of our developers, on a server, on a cluster, in Docker containers. No licence keys to copy, no licence server to set up, the only thing we need is a JVM and enough RAM. But that doesn’t make Spark the only candidate. Commercial solutions with a flexible licence model might work just as well in that respect.

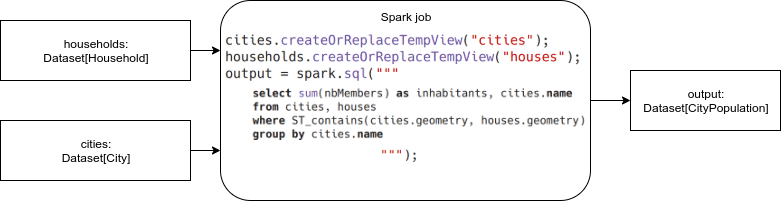

Secondly, Apache Spark is especially well suited to decouple the transformation logic and the data storing / loading. Apache Spark allows you to use multiple ways to interact / transform with your data, from object-oriented code to regular SQL, while abstracting away the data source. You can do a complex SQL query on any data frame or dataset. When the dataset was actually a table on a SQL server, Spark will use predicate pushdown to push filter operations to the SQL server, but when it was just a CSV file, Spark will take care of the filtering, transparently.

Apache Spark also offers a type-safe Scala API, which helps to prevent frequent errors. However, as we will see later, this is not perfect. Not the whole API is type-safe, and the Scala language (which is needed for the type-safe API) might scare away some people. An alternative would be to use PySpark, which hides away a lot of the underlying complexity, though it does reduce performance in some use cases.

A frequently heard complaint is the complexity of Spark. The complexity here is not really Spark itself (which is surprisingly simple to use, don’t let the big data buzzwords scare you away!) but the whole process of dimensioning, setting up and maintaining a Hadoop cluster. First of all, it is not the technology that mandates a cluster but the data. For master data, you might not need a cluster at all: Spark runs happily on a single machine and can scale out to a cluster later (e.g. when we mix transactional data with our master data, inflating our requirements). Also, the big cloud providers all offer managed cluster service, and bigger organizations often already have a Hadoop cluster set up.

For smaller data sets, the overhead compared to e.g. Python/Pandas will be measurable: Spark takes a while to start up and shut down, and also does query planning and code generation before launching an action. This will be measurable if the action is very small, and there is a fixed memory overhead. However, in most cases, this isn’t too much of a problem, as the overhead doesn’t grow when the datasets grow.

Subscribe to our newsletter and stay up to date.